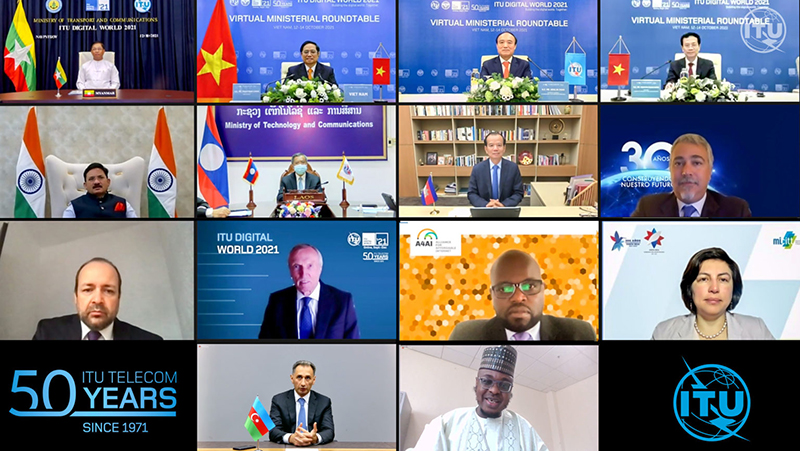

Our events bring together public and private sector leaders, SMEs, academia and experts from around the world to explore the impact of digital technologies and accelerate digital transformation. We bring you world-class content, debates, exhibition and networking opportunities, whatever the format.

Global meeting place

Expert, international perspectives

Government and industry leaders

Technology, policy & strategy trends

Universal connectivity

ICTs for development

Event elements

Exhibit

A global exhibition platform for innovative digital solutions, featuring National Pavilions, Industry Stands and tech SMEs.

Debate

Expert speakers, industry and government leaders, world-class debate. Exploring the new realities of digital transformation.

Network

Connect with speakers, exhibitors, peers and participants in formal and informal networking sessions and events.

Sponsor

Your brand, message and thought-leadership. At the heart of the international ICT community.

SME focus

Supporting tech SMEs across the world – through exhibition workstations, workshops, debates and targeted networking.

Awards

Opening the door to opportunity for innovative tech SMEs with real social impact. UN credibility, expert mentoring, visibility and networking in our SME Awards and Masterclasses.
